- A threat actor named Jinkusu is selling AI tools that bypass KYC verification on financial platforms using real-time face swaps and voice changes.

- The tool uses InsightFace for live video deepfakes and voice modulation to trick biometric checks at banks and crypto exchanges.

- Binance’s security chief warned in 2023 that advancing AI could crack KYC systems using just one photo of a victim.

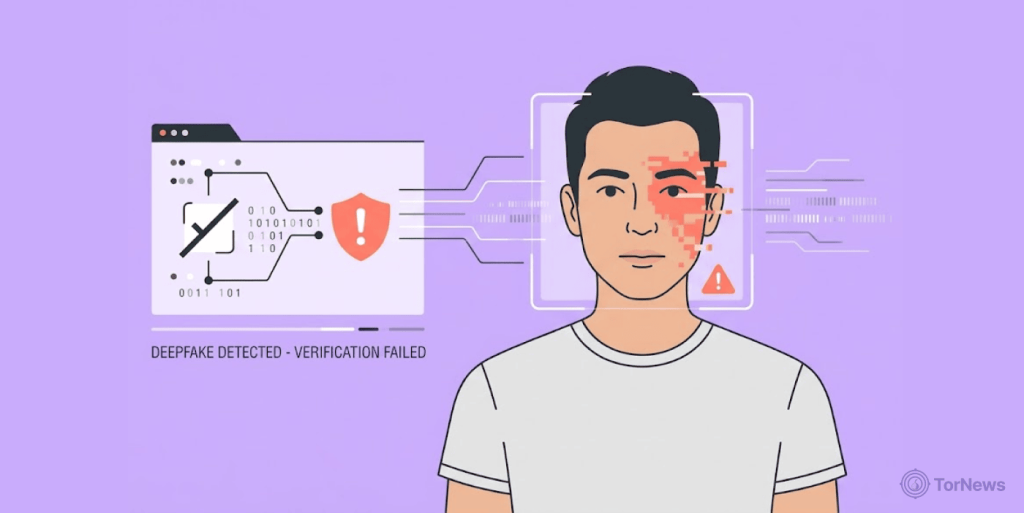

AI-generated deepfakes are becoming more common. With them, attackers can assume the identity of real people to cheat their way through job interviews, pass bank security checks, and even authorize wire transfers.

The latest to make waves on darknet markets are AI deepfake tools that can break into bank and crypto accounts. These tools trick identity checks in real time, lowering the bar for fraud.

Jinkusu Deepfake KYC Bypass Tools Target Crypto and Banks

A cybercriminal known as “Jinkusu” is openly selling fraud kits on the darknet. These kits use artificial intelligence to fool know-your-customer (KYC) systems. Banks and crypto platforms rely on KYC to verify who you are. But now, scammers can slip right through the front door.

Cybercrime tracker Dark Web Informer broke the news on Sunday. The seller Jinkusu offers tools that mimic real faces and voices during live video checks. Security firm Vecert Analyzer looked under the hood and discovered real-time face swaps powered by the open-source AI model InsightFace.

This sophisticated AI fraud kit exists alongside a booming market for stolen real identities. UK identities are being sold on the dark web for just $30, which shows that criminals don’t always need high-tech tools when cheap, stolen credentials are readily available.

The tool also changes voices using tech similar to ElevenLabs. All this runs fast enough to trick live calls. No technical skill needed.

Why This Scares Banks and Crypto Platforms

The danger here is very real. KYC systems ask you to show your ID or look into a camera. They check that you are a real person. But these deepfake tools create a fake person in real time.

The AI swaps faces smoothly. It matches gestures and lip movements. Voice modulation seals the deal. A scammer could pretend to be you, open an account in your name, and drain your funds.

As Dedy Raviv, who runs a blockchain security firm called Cyvers, put it: “As AI lowers the barrier to entry for synthetic identity fraud, the front door of platforms always remains vulnerable.” He urges companies to stop relying on just one check. They need layers, such as behavioral analysis and device fingerprinting, plus AI monitoring.
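The layered approach can be sketched in a few lines of Python. This is only an illustration, not any platform's actual system: the signal names, the 0-to-1 risk scale, and both thresholds are assumptions made up for the example. The point it shows is that a deepfake that sails through the face check can still be stopped when a separate layer, such as device fingerprinting, raises a flag.

```python
from dataclasses import dataclass


@dataclass
class OnboardingSignals:
    """Hypothetical per-session risk scores, each from 0.0 (clean) to 1.0 (suspicious)."""
    liveness_risk: float   # face/voice liveness check
    device_risk: float     # device fingerprinting (e.g. virtual camera, emulator)
    behavior_risk: float   # behavioral analysis (typing, navigation patterns)


# Illustrative thresholds, chosen for this example only.
HARD_FAIL = 0.9        # any single layer at or above this rejects outright
REVIEW_AVERAGE = 0.5   # average at or above this goes to a human reviewer


def decide(signals: OnboardingSignals) -> str:
    """Combine independent layers so that no single check decides alone."""
    scores = [signals.liveness_risk, signals.device_risk, signals.behavior_risk]
    if any(not 0.0 <= s <= 1.0 for s in scores):
        raise ValueError("risk scores must be between 0.0 and 1.0")
    if max(scores) >= HARD_FAIL:
        return "reject"
    if sum(scores) / len(scores) >= REVIEW_AVERAGE:
        return "manual_review"
    return "approve"


# A deepfake that passes the liveness check but runs through a flagged device.
deepfake_session = OnboardingSignals(liveness_risk=0.1, device_risk=0.95, behavior_risk=0.1)
print(decide(deepfake_session))
```

In this sketch the session is rejected even though the liveness score looks clean, because the device layer alone crosses the hard-fail threshold.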

Back in May 2023, Binance’s Chief Security Officer Jimmy Su raised the alarm. He warned that improving AI algorithms would break through KYC with just a single photo of a victim. That prediction is now a reality. One stolen selfie could be all a thief needs.

Crypto Investors Lost $5.5B as New AI-Powered Fraud Kit Fuels Romance Scams

This new kit does more than open fake accounts. It also fuels “pig butchering” romance scams. Scammers use it to build fake relationships online, then convince their victims to invest in phony crypto deals.

In 2024 alone, cryptocurrency investors lost around $5.5 billion across 200,000 pig butchering scam cases. These are only the reported cases, so actual losses could be higher.

Experts suspect Jinkusu is the same hacker who released the Starkiller phishing kit in February 2026. That kit was clever. Instead of fake login pages, it ran a real Chrome browser inside a hidden container.

A genuine login page of the target brand would appear, but it relayed everything the user typed, including login credentials such as passwords, to the hacker. In 2025, crypto phishing losses dropped by 83%. However, wallet drainer scripts and AI malware have not gone away; they remain very much active. Scam Sniffer reported that new threats keep emerging.

So what can you do? Use hardware wallets and enable two-factor authentication (2FA). And always trust your instincts: if something doesn’t feel right about a video call, there is a good chance something is off. The face on screen might not be real.